Solutions to exercises

Source: Gray (2004), Sec. 3.18, pp. 54-55.

Exercise 54:

a)

c = 1-p

b)

P\{W=0\} = \sum_{k=0}^\infty P\{W=0| N=k\} P\{N=k\}

= (1-p) \sum_{k=0}^\infty \left(\dfrac{p}{2}\right)^k

= \dfrac{1-p}{1-p/2}

P\{W=1\} = \dfrac{p}{2-p}
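The closed forms in b) can be checked numerically. The sketch below assumes, as read off from the two lines of the sum above, that P\{N=k\} = (1-p) p^k and P\{W=0|N=k\} = (1/2)^k; the value of `p` and the truncation length `terms` are arbitrary illustrative choices.

```python
def p_w0_series(p, terms=200):
    """Truncated version of sum_k P{W=0|N=k} P{N=k} = sum_k (1/2)^k (1-p) p^k."""
    return sum((1 - p) * (p / 2) ** k for k in range(terms))


def p_w0_closed(p):
    """Closed form (1-p) / (1 - p/2) from the geometric series."""
    return (1 - p) / (1 - p / 2)


def p_w1_closed(p):
    """Complement of P{W=0}, written as p / (2 - p)."""
    return p / (2 - p)


p = 0.3  # hypothetical value, any 0 < p < 1 works
series_value = p_w0_series(p)
closed_value = p_w0_closed(p)
total = p_w0_closed(p) + p_w1_closed(p)  # should be 1 up to rounding
```

The truncated series and the closed form agree to floating-point precision, and the two probabilities add up to one.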

c)

P\{N=k | N<10\} = \left[

\begin{array}{ll}

\dfrac{1-p}{1-p^{10}} p^k, & 0 \le k < 10 \\

0, & k \ge 10

\end{array}

\right.
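A quick sanity check of c): the conditional pmf should sum to one over 0 \le k < 10 and keep the geometric ratio p between consecutive values. This is a minimal sketch; the function name and the value of `p` are illustrative, not from the source.

```python
def truncated_geometric_pmf(p, cutoff=10):
    """P{N=k | N<cutoff} for k = 0..cutoff-1, assuming P{N=k} = (1-p) p^k."""
    norm = 1 - p ** cutoff  # P{N < cutoff}
    return [(1 - p) * p ** k / norm for k in range(cutoff)]


p = 0.6  # hypothetical value
pmf = truncated_geometric_pmf(p)
pmf_total = sum(pmf)      # should be 1 up to rounding
ratio = pmf[1] / pmf[0]   # should equal p
```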

Exercise 55:

a)

P_{Y_n}(k) = \left[

\begin{array}{ll}

(1 - p) (1-\epsilon) + p \epsilon & k=0, \\

p (1-\epsilon) + (1-p)\epsilon & k=1

\end{array}

\right.

b)

Yes, it is a Bernoulli process.

c)

P_{Y_n|X_n}(j|k) = \left[

\begin{array}{ll}

1-\epsilon & j=0, k=0, \\

\epsilon & j=1, k=0 \\

\epsilon & j=0, k=1 \\

1-\epsilon & j=1, k=1

\end{array}

\right.

d)

P_{X_n|Y_n}(j|k) = \left[

\begin{array}{ll}

\dfrac{(1-p)(1-\epsilon)}{(1-p)(1-\epsilon)+p\epsilon} & j=0, k=0, \\

\dfrac{p\epsilon}{(1-p)(1-\epsilon)+p\epsilon} & j=1, k=0 \\

\dfrac{(1-p)\epsilon}{p(1-\epsilon)+(1-p)\epsilon} & j=0, k=1 \\

\dfrac{p(1-\epsilon)}{p(1-\epsilon)+(1-p)\epsilon} & j=1, k=1

\end{array}

\right.

e)

P\{Y_n \neq X_n\} = P\{W_n = 1 \} = \epsilon
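The pmfs of Exercise 55 can be reproduced with Bayes' rule. The sketch below assumes P\{X_n=1\} = p and a crossover probability \epsilon, as the formulas above suggest; the function names and the numerical values are illustrative only.

```python
def channel(y, x, eps):
    """P{Y_n = y | X_n = x}: the input bit is flipped with probability eps."""
    return 1 - eps if y == x else eps


def output_pmf(p, eps):
    """P{Y_n = y} by total probability over the input bit."""
    prior = {0: 1 - p, 1: p}
    return {y: sum(channel(y, x, eps) * prior[x] for x in (0, 1)) for y in (0, 1)}


def posterior(p, eps):
    """P{X_n = x | Y_n = y} by Bayes' rule, keyed as (x, y)."""
    prior = {0: 1 - p, 1: p}
    out = output_pmf(p, eps)
    return {(x, y): channel(y, x, eps) * prior[x] / out[y]
            for x in (0, 1) for y in (0, 1)}


def error_probability(p, eps):
    """P{Y_n != X_n}, summing the flip probability over both inputs."""
    prior = {0: 1 - p, 1: p}
    return sum(prior[x] * channel(1 - x, x, eps) for x in (0, 1))


p, eps = 0.3, 0.1  # hypothetical values
out = output_pmf(p, eps)
post = posterior(p, eps)
err = error_probability(p, eps)
```

The error probability does not depend on p, which agrees with e).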

Source: Beichelt, F. (2016), Chapter 8, p. 376 and Chapter 10, p. 492.

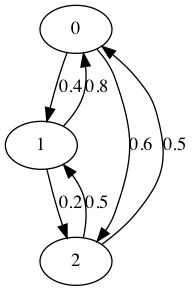

Exercise 8.1:

(1)

P\{X_2 =2| X_1 =0, X_0 =1\} = P\{X_2 =2| X_1 =0\} = p_{02} = 0.5

P\{X_2 =2, X_1 =0 | X_0 =1\}

= P\{X_2=2| X_1=0, X_0=1\} P\{X_1=0| X_0=1\}

= p_{02} p_{10} = 0.2

(2)

P\{X_{n+1}=2, X_n=0 | X_{n-1}=0\} = P\{X_2=2, X_1=0 | X_0=0\} = p_{02} p_{00} = 0.25

(3)

P\{X_1=2\} = 0.4 p_{02} + 0.3 p_{12} + 0.3 p_{22} = 0.5

P\{X_1=1, X_2=2\}

= p_{12} P\{X_1=1\}

= 0.4 (0.4 p_{01} + 0.3 p_{11} + 0.3 p_{21}) = 0.072
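The values of Exercise 8.1 can be recomputed in a few lines. The transition matrix is only given in the figure, so the entries below are an assumption: they are inferred from the numbers used in the solution (p_{00} = p_{02} = 0.5, p_{10} = p_{12} = 0.4, initial weights 0.4, 0.3, 0.3), with the remaining entries chosen so that each row sums to one and the stated results are reproduced.

```python
# Assumed transition matrix, inferred from the solution values (see lead-in).
P = [
    [0.5, 0.0, 0.5],
    [0.4, 0.2, 0.4],
    [0.0, 0.4, 0.6],
]
# Initial distribution P{X_0 = i}, read off the weights used in part (3).
init = [0.4, 0.3, 0.3]


def step(dist, matrix):
    """One step of the chain: new_dist[j] = sum_i dist[i] * matrix[i][j]."""
    n = len(matrix)
    return [sum(dist[i] * matrix[i][j] for i in range(n)) for j in range(n)]


# Part (1): Markov property, then the product rule.
part1_a = P[0][2]              # P{X_2=2 | X_1=0, X_0=1}
part1_b = P[1][0] * P[0][2]    # P{X_2=2, X_1=0 | X_0=1}

# Part (2): homogeneity lets us shift the time index.
part2 = P[0][0] * P[0][2]      # P{X_{n+1}=2, X_n=0 | X_{n-1}=0}

# Part (3): distribution after one step, then one more transition.
dist1 = step(init, P)
part3_a = dist1[2]             # P{X_1=2}
part3_b = dist1[1] * P[1][2]   # P{X_1=1, X_2=2}
```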

Exercise 8.2:

(1)

\mathbf{P}^{(2)} = \mathbf{P}^2 = \left(\begin{array}{ccc} 0.58 & 0.12 & 0.3 \\ 0.32 & 0.28 & 0.4 \\ 0.36 & 0.18 & 0.46 \end{array} \right)

(2)

P\{X_2=0\} = 0.42

P\{X_0=0,X_1=1,X_2=2\} = P\{X_0=0\}\cdot p_{01} \cdot p_{12} = 0
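The value P\{X_2=0\} = 0.42 follows from the two-step matrix once an initial distribution is fixed. The sketch below assumes a uniform initial distribution over the three states; that distribution is not stated in this section and is used here only because it reproduces the result.

```python
# Two-step transition matrix as given in part (1).
P2 = [
    [0.58, 0.12, 0.30],
    [0.32, 0.28, 0.40],
    [0.36, 0.18, 0.46],
]
# Assumed initial distribution (uniform); not given in this section.
init = [1 / 3, 1 / 3, 1 / 3]

# P{X_2 = 0} = sum_i P{X_0 = i} * p_{i0}^{(2)}
p_x2_0 = sum(init[i] * P2[i][0] for i in range(3))

# Each row of a transition matrix must sum to one.
row_sums = [sum(row) for row in P2]
```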

Exercise 8.3:

(1)

P\{X_3=2\} = (0, 0, 1) \left(\mathbf{P}^3\right)^{\intercal} (0.4, 0.4, 0.2)^{\intercal} = 0.2864

(2)

(3)

\boldsymbol{\pi} = \left(\begin{array}{c} 0.3947 \\ 0.3070 \\ 0.2982 \end{array}\right)

Exercise 8.4:

It is not a Markov process. For instance:

p_{X_3|X_2,X_1}(1|0,1) = 0

but

p_{X_3|X_2}(1|0) = \dfrac{1}{4}

Exercise 8.5:

(1)

(2)

\boldsymbol{\pi} = \left(\begin{array}{c} 0.25 \\ 0.25 \\ 0.25 \\ 0.25 \end{array}\right)

Exercise 10.1

(1)

\mathbb{E}\{X_{n+1} | X_0,\ldots, X_n\} = \mathbb{E}\{Y_{n+1}^2 + X_n | X_0,\ldots, X_n\} = \mathbb{E}\{Y_{n+1}^2\} + X_n > X_n \qquad \Rightarrow \qquad \text{It is not a martingale; it is a submartingale.}

(2)

\mathbb{E}\{X_{n+1} | X_0,\ldots, X_n\} = \mathbb{E}\{Y_{n+1}^3\} + X_n = X_n \qquad \Rightarrow \qquad \text{It is a martingale.}

(3)

\mathbb{E}\{X_{n+1} | X_0,\ldots, X_n\} = \mathbb{E}\{|Y_{n+1}|\} + X_n > X_n \qquad \Rightarrow \qquad \text{It is a submartingale.}

Exercise 10.2

\mathbb{E}\{X_{n+1} | X_0,\ldots, X_n\} = \mathbb{E}\{Y_{n+1} - \mathbb{E}\{Y_{n+1}\}\} + X_n = X_n \qquad \Rightarrow \qquad \text{It is a martingale.}

Ex. 10.3

\mathbb{E}\{X_{n+1} | X_0,\ldots, X_n\} = \frac{T}{2} X_n \qquad \Rightarrow

- It is a martingale for T=2.

- It is a submartingale for T \ge 2.

- It is a supermartingale for 0 < T \le 2.

Ex. 10.4

\mathbb{E}\{X_{N-1}\} = -\infty

Ex. 10.6

(1)

The winnings after losing 5 games and winning the 6th one are € 122.

(2)

Yes, it is a martingale.

Source: Ibe (2013), pp. 29-48.

Ex. 2.12:

(a) For p=\dfrac{1}{2} it is a martingale.

(b) For p \ge \dfrac{1}{2} it is a submartingale.

(c) For p \le \dfrac{1}{2} it is a supermartingale.

Ex. 2.13.

TBD

Ex. 2.14.